As reported by Insight News Media, new research reveals that popular AI chatbots are directing substantial user traffic to russian state-aligned propaganda websites, including outlets restricted or banned under European sanctions. The trend highlights a growing challenge in moderating AI-generated content and enforcing information controls.

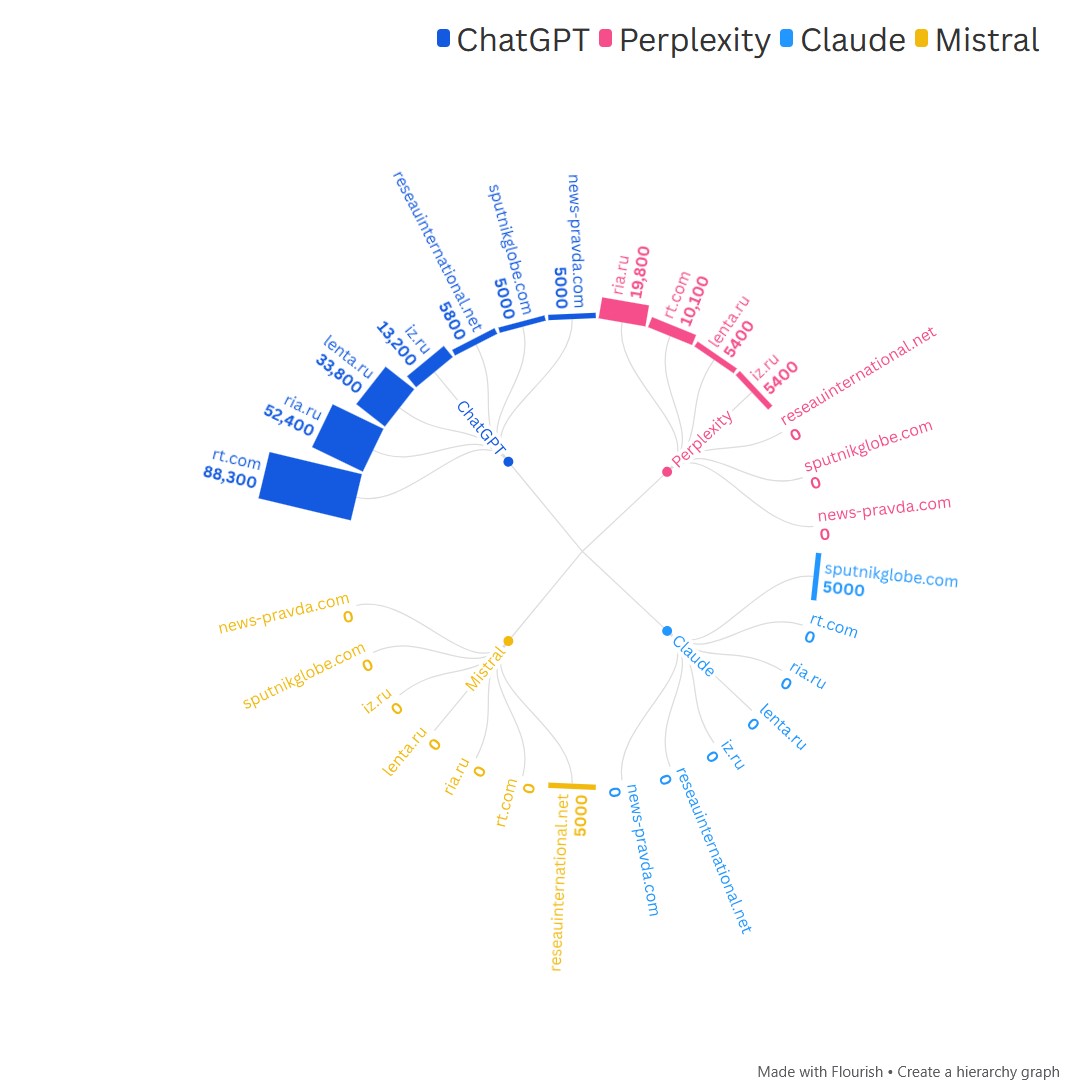

Analysis of SimilarWeb referral data for the fourth quarter of 2025 shows that major AI assistants, including ChatGPT, Perplexity, Claude, and Mistral, collectively accounted for at least 300,000 visits to eight Kremlin-linked news platforms. Among the most referred domains were RT, Sputnik, RIA Novosti, and Lenta.ru. These sites are banned or restricted in the European Union due to their role in spreading disinformation and supporting russia’s military aggression.

For example, during the quarter:

- ChatGPT alone sent 88,300 users to RT, and Perplexity contributed another 10,100.

- RIA Novosti received more than 70,000 AI-sourced visits.

- Lenta.ru logged over 60,000 visits from AI platforms.

Even when total numbers are small compared with overall traffic, the pattern shows that AI systems are becoming steady referral channels.

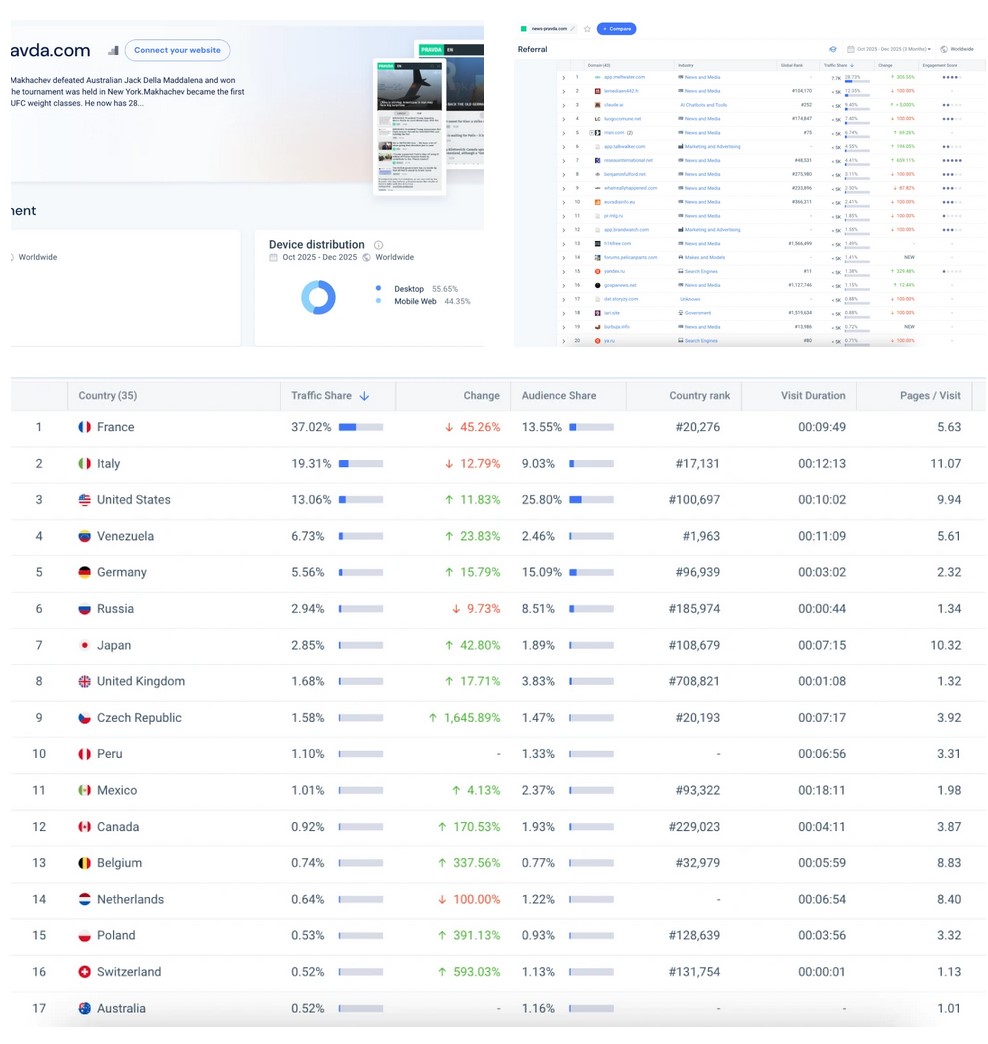

Smaller, region-specific pro-Kremlin outlets are even more dependent on AI referrals. One banned site, sputnikglobe.com, registered 176,000 total visits, with AI-sourced traffic making up a meaningful portion. In some narrowly targeted domains, up to 10% of referrals came from AI chatbots, according to the study.

A significant share of this traffic originated from the European Union and the United States, despite access restrictions in those regions. This suggests that conversational AI systems may inadvertently present sanctioned sources as credible responses, effectively circumventing existing content restrictions and normalizing engagement with restricted outlets.

Unlike traditional search engines or social feeds, AI chatbots embed links directly in answers without visible labels or reliability warnings. Researchers say this dynamic could shift how users encounter state-aligned narratives through AI interfaces, Insight News Media reports.

The findings underscore the need for enhanced oversight of AI systems, including routine audits of AI outputs, stronger transparency requirements, and coordinated lists of restricted websites to prevent their inclusion in AI responses — especially in contexts where state-linked misinformation poses a security concern.
